Preliminary analysis of audio recordings

How to write an article for Tygodnik Polityka and not go crazy

In recent months, I have published two articles in Tygodnik Polityka, each based on months of analysis of material I had collected beforehand. The first concerned Tygodnik Trybuna and drew on archival scans of that newspaper. The methodology was simple: scan the archival issues at the National Library, run OCR, and search the resulting text for interesting keywords. The second article, about Radio AK Jutrzenka, was a much greater challenge, because it required analyzing dozens of hours of recordings.

Until now, the organizations I know of that work with audio have analyzed it largely by hand, and the only way to speed things up was to listen at double playback speed. That is why I am writing this article: to share my method, which is still quite simple and primitive but can significantly speed up the work.

Capturing material

Radio2Podcast

My article concerned a radio station that broadcasts on FM and online. This was a perfect opportunity to test my script Radio2Podcast, which let me archive 127 broadcasts (as of the publication of this text) without having to remember to start recording during the public affairs slot every day. This is a very specific application, of course, but it saved me a lot of hassle.

Why did I collect so much material? I wanted the article to be as comprehensive as possible and to demonstrate consistency in the conspiracy narratives. That way I could show that this was not an isolated incident but a continuous pattern of demonizing the West, NATO, and the EU while showing leniency towards Russia and China. It also meant 381 hours of audio to listen to, which is hard for one person to get through manually.

yt-dlp

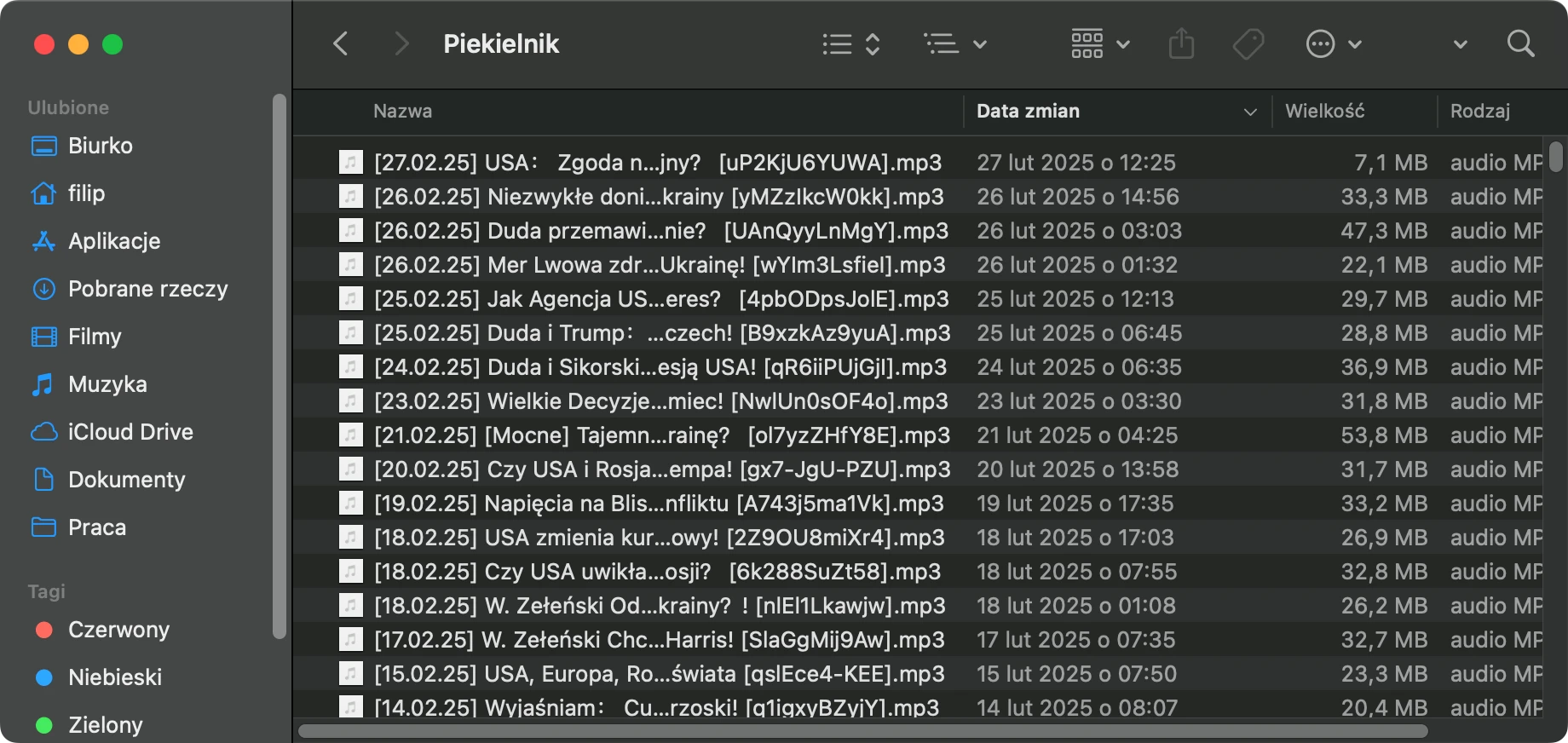

This is a fairly unusual use case, of course: traditional FM radio (like online radio) is an interesting but relatively small slice of reality. An equally easy, important, and interesting application is analyzing YouTube channels that attract large audiences. How can this be done? You can use the tool yt-dlp, which with a single simple command

yt-dlp -x --audio-format mp3 --cookies-from-browser "chrome" "https://www.youtube.com/RadioJutrzenka"

can download all the videos from the channel of interest (and convert them to mp3 files).
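For repeated runs, the same command can be wrapped in a short Python script. The sketch below is an illustration rather than a tested part of the workflow described here: the function names `build_ytdlp_command` and `download_channel` and the archive file name `downloaded.txt` are assumptions made for this example. The `--download-archive` option is a standard yt-dlp flag that records finished downloads so that later runs skip them.

```python
import subprocess


def build_ytdlp_command(channel_url, archive_file="downloaded.txt", browser="chrome"):
    """Build the yt-dlp call from the text, plus a download archive."""
    return [
        "yt-dlp",
        "-x",                                # extract audio only
        "--audio-format", "mp3",             # convert the audio to mp3
        "--cookies-from-browser", browser,   # reuse cookies from this browser
        "--download-archive", archive_file,  # skip items already listed here (assumed file name)
        channel_url,
    ]


def download_channel(channel_url):
    """Run yt-dlp; raises CalledProcessError if yt-dlp exits with an error."""
    subprocess.run(build_ytdlp_command(channel_url), check=True)
```

Calling `download_channel("https://www.youtube.com/RadioJutrzenka")` once a day would then fetch only the recordings added since the previous run.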

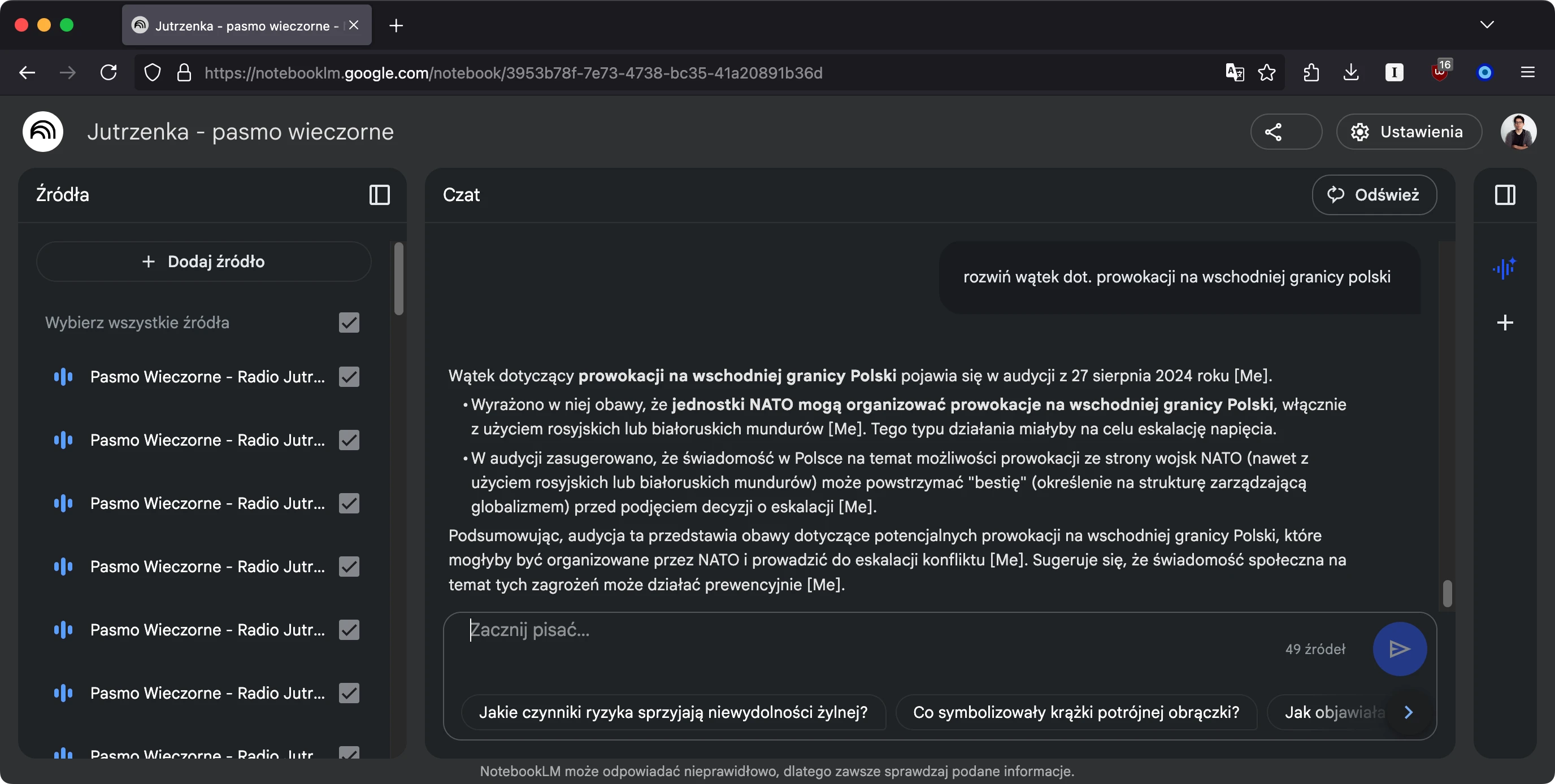

Working with audio

The final stage is analyzing the collected material. I used Google NotebookLM, a free AI tool for analyzing documents you upload. Fortunately, it also accepts audio files and generates transcripts from them. A single project can hold 50 recordings or other documents, and you can query the chat to search through them. Every claim the AI makes needs to be verified, of course, because like all such systems it can hallucinate.
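Because of the 50-source limit, a larger archive has to be spread across several projects. A minimal sketch of that split, assuming the recordings sit in one folder as mp3 files (the function names and the folder layout are hypothetical, and the upload itself still happens by hand in the browser):

```python
from pathlib import Path

MAX_SOURCES_PER_PROJECT = 50  # the per-project limit quoted in the text


def split_into_batches(items, batch_size=MAX_SOURCES_PER_PROJECT):
    """Split a list into consecutive batches of at most batch_size items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return [items[i:i + batch_size] for i in range(0, len(items), batch_size)]


def plan_projects(folder, pattern="*.mp3"):
    """Group the recordings in a folder into per-project upload batches."""
    files = sorted(Path(folder).glob(pattern))  # sorted so batches are stable
    return split_into_batches(files)
```

With the 127 broadcasts mentioned earlier, this yields three projects holding 50, 50, and 27 recordings.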

We cannot always work online, of course, especially when an investigation concerns sensitive issues that we do not want to share with Google (or other corporations). In such cases, the transcription has to be done locally, for example with the free Whisper engine, and the analysis then run with a locally hosted AI model. However, I do not have the technical means to carry out such an experiment myself. What is certain is that, alongside new problems, the development of AI is also bringing valuable and interesting solutions that can significantly speed up analytical work. And let’s hold on to that positive thought.
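For readers who want to try the local route, the transcription step might look like the sketch below. It was not used in this investigation and has not been tested on these recordings. It assumes the open-source `openai-whisper` Python package and ffmpeg are installed; the model size `small`, the language code `pl`, and the helper names are choices made for this example only.

```python
def format_timestamp(seconds):
    """Convert a time in seconds to HH:MM:SS."""
    hours, rest = divmod(int(seconds), 3600)
    minutes, secs = divmod(rest, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}"


def segments_to_lines(segments):
    """Turn Whisper-style segments into '[HH:MM:SS] text' lines."""
    return [
        f"[{format_timestamp(seg['start'])}] {seg['text'].strip()}"
        for seg in segments
    ]


def transcribe_file(audio_path, model_name="small", language="pl"):
    """Transcribe one recording locally and return timestamped lines."""
    # Imported here so the helpers above work without Whisper installed.
    import whisper  # assumed dependency: pip install openai-whisper

    model = whisper.load_model(model_name)
    result = model.transcribe(str(audio_path), language=language)
    return segments_to_lines(result["segments"])
```

The timestamps make it possible to jump back to the exact place in a recording when verifying a quote, which matters just as much for local transcripts as for AI-generated answers.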